SUPERCOMPUTER

REMEMBER THE MOVIE 2001 AND HAL

Dee Finney's blog

start date July 20, 2011

today's date April 27, 2014

page 671

TOPIC: THE WORLD'S SUPER COMPUTER

NOTE: WHEN I FIRST HEARD ABOUT THIS SUPER COMPUTER - I THOUGHT -

CERTAINLY CHINA WOULD HAVE IT. THE UNITED STATES NO LONGER IS TOP DOG

INTERESTING VIDEO - HOWEVER - WHOEVER OPERATED THIS COMPUTER CAN'T SPELL.

https://www.youtube.com/watch?v=Vz7_oCrBzB4

The Unal Center of Education, Research & Development (UCERD) carries out research and education in Computer Science and in Electrical and Mechanical Systems. The mission of UCERD is to investigate, develop and manage computer technology in order to facilitate scientific progress. To this end, it gives special attention to Computers, Telecommunications and Mechanical Applications in Science and Engineering. These activities complement each other and are closely related. The primary objective of UCERD is to build a multidisciplinary setup that helps industrial and academic practitioners improve their understanding of needs and focus on basic research.

UCERD is further organized into the following groups:

Research & Studies

The UCERD research group motivates people to study established facts, to explore and collect more knowledge, and to provide beneficial information that enriches the mind. It continually upgrades knowledge and improves on what we currently have, to satisfy the human desire to "know".

The importance of research and knowledge in Islam can be seen from the fact that the very first verses of the Quran revealed to the Holy Prophet are about reading and knowledge.

The Quran says: "Read! In the name of thy Lord and Cherisher, Who created. Created man out of a (mere) clot of congealed blood. Read! And thy Lord is Most Bountiful. He Who taught (the use of) the Pen. Taught man that which he knew not." The Quran asks: "Are those equal, those who know and those who do not know?"

The Hadith literature (the sayings of the Prophet, peace be upon him) is also full of references to the importance of knowledge. The Messenger of God (peace be upon him) said: 'Seek knowledge from the cradle to the grave.' The Prophet Muhammad (peace be upon him) said: 'Seeking of knowledge is the duty of every man and woman.'

Projects

The UCERD Project Channel provides practical experience with the scientific method and helps stimulate interest in scientific inquiry. The outcome of a UCERD professional or investigatory project is a discovery that can improve people's lives and lifestyles.

Technology News Channel

As society develops, more advanced means of communication are required. The UCERD TechNews channel plays an important role in keeping people informed about the latest technologies.

UCERD Team

UCERD is seeking more collaboration between institutions, between research groups across different disciplines, between researchers from academia and industry, and between international and local organizations. The studies, research and development carried out by UCERD are organized into several research groups, whose work is published in international conferences and journals.

UCERD People

The UCERD Research & Development Team is looking for friendly technologies that fulfill human needs, so that science and humanity can move forward together.

| Name | Contact | Profile |
|---|---|---|
| Tassadaq Hussain Cheema | hussain.tassadaq@gmail.com | Tassadaq Hussain received his Master's degree in Electronics for Systems from the Institut supérieur d'électronique de Paris in 2009. He is currently pursuing a Ph.D. in Computer Architecture at UPC. During his Ph.D. he is working as a research student with the Computer Sciences group at the Microsoft Barcelona Supercomputing Center (BSC). The group is responsible for investigating and developing high-performance computing systems for data-bound and compute-bound applications. Its research focuses mainly on shared-memory multiprocessor architectures, clustered computing, reconfigurable computing, multi/many-core architectures, heterogeneous architectures, transactional memories, ISA (Instruction Set Architecture) virtualization, microprocessor micro-architectures, and software instrumentation and profiling. Tassadaq's research studies the use of accelerators for supercomputing. Its main focus is scheduling multi-accelerator/vector processor data movement in hardware. At the theoretical level, he is proposing novel architectures for accelerating HPC applications. |
| Laeeq Ahmad | laeeq@ucerd.com | Laeeq earned a Bachelor of Science in Electrical Engineering, with a specialization in Telecommunication Studies, from Riphah International University. He has worked in telecommunications since 2005, when he joined Huawei Telecom, and has spent 7 years in senior leadership positions at several leading and diverse organizations. As a member of the Group Lead, his responsibilities include providing IT management assurance and knowledge management. |
| Faisal | faysal@ucerd.com | Mr. Faisal has worked on high-speed and RF PCB design since 2004, with emphasis on signal and power integrity, EMC and thermal analysis. He also has experience designing closed-loop servo motor control systems for robotic applications. |
| Farooq Riaz Malhi | Mobilink Pakistan, farooq.riaz@mobilink.net | Mr. Farooq Riaz is a Telecom Engineer at Mobilink Networks. He received a bachelor's degree in Electrical Engineering, with a specialization in Electronics and Communications, in 2005. He has worked in telecommunications for the last 5 years and is currently Team Lead (Fiber Optics Operations) at PMCL-Mobilink Pakistan. He has extensive experience with OSP issues and received Network Management training from Huawei University, China. |
| Safwan Mehboob Qureshi | safwanmehboob@hotmail.com | Mr. Safwan has diverse experience in telecommunications, starting with installation and upgrades and progressing to Level 3 and 4 support for TETRA communication. |
| Uzma Siddique | Researcher, Wireless Communications, University of Manitoba, Canada; siddiquu@ee.umanitoba.ca | Uzma's expertise is in telecommunications. She formulates joint scheduling, resource allocation and power control problems, and designs procedures and algorithms for the uplink and downlink of multi-tier OFDMA-based networks in both single-cell and multicell scenarios. |

A supercomputer is a computer at the frontline of contemporary processing capacity, particularly in speed of calculation, with individual operations taking on the order of nanoseconds.

Supercomputers were introduced in the 1960s. For decades they were made initially and primarily by Seymour Cray at Control Data Corporation (CDC), Cray Research and later companies bearing his name or monogram. The supercomputers of the 1970s used only a few processors. In the 1990s machines with thousands of processors began to appear, and by the end of the 20th century massively parallel supercomputers with tens of thousands of "off-the-shelf" processors were the norm. As of November 2013, China's Tianhe-2 supercomputer is the fastest in the world at 33.86 petaFLOPS, or 33.86 quadrillion floating point operations per second.

Systems with massive numbers of processors generally take one of two paths. In one approach (e.g., in distributed computing), a large number of discrete computers (e.g., laptops) distributed across a network (e.g., the Internet) devote some or all of their time to solving a common problem. Each individual computer (client) receives and completes many small tasks and reports the results to a central server, which integrates the task results from all the clients into the overall solution. In another approach, a large number of dedicated processors are placed in close proximity to each other (e.g. in a computer cluster). This saves considerable time moving data around and makes it possible for the processors to work together (rather than on separate tasks), for example in mesh and hypercube architectures.
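The first approach above, in which many clients complete small tasks and report to a central server, can be sketched in a few lines of Python. This is a single-machine simulation only. It assumes that threads stand in for networked clients, and the function names `split_into_tasks`, `client_work` and `run_server` are hypothetical. A real volunteer-computing system would also need networking, retries and validation of returned results.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_tasks(n, chunk):
    """Server side: cut the range [0, n) into small work units of at most `chunk` items."""
    return [(start, min(start + chunk, n)) for start in range(0, n, chunk)]


def client_work(task):
    """Client side: complete one small task (here, a partial sum of squares)."""
    start, stop = task
    return sum(i * i for i in range(start, stop))


def run_server(n, chunk=1000, clients=8):
    """Hand tasks to a pool of simulated clients, then integrate their partial results."""
    tasks = split_into_tasks(n, chunk)
    with ThreadPoolExecutor(max_workers=clients) as pool:
        partial_results = list(pool.map(client_work, tasks))
    return sum(partial_results)  # the "overall solution"


print(run_server(100_000))
```

The server only splits the work and adds up the results, so clients never need to talk to each other. That independence is what makes this pattern suit loosely connected machines.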

The use of multi-core processors combined with centralization is an emerging trend. One can think of this as a small cluster (the multicore processor in a smartphone, tablet, laptop, etc.) that both depends upon and contributes to the cloud.

Supercomputers play an important role in the field of computational science. They are used for a wide range of computationally intensive tasks in many fields, including:

- quantum mechanics
- weather forecasting
- climate research
- oil and gas exploration
- molecular modeling (computing the structures and properties of chemical compounds, biological macromolecules, polymers, and crystals)
- physical simulations (such as simulations of the early moments of the universe, airplane and spacecraft aerodynamics, the detonation of nuclear weapons, and nuclear fusion)

Throughout their history, they have been essential in the field of cryptanalysis.

History

The history of supercomputing goes back to the 1960s, with the Atlas at the University of Manchester and a series of computers at Control Data Corporation (CDC) designed by Seymour Cray. These used innovative designs and parallelism to achieve superior computational peak performance.

The Atlas was a joint venture between Ferranti and Manchester University. It was designed to operate at processing speeds approaching one microsecond per instruction, about one million instructions per second. The first Atlas was officially commissioned on 7 December 1962 as one of the world's first supercomputers. At that time it was considered the most powerful computer in the world by a considerable margin, equivalent to four IBM 7094s.

The CDC 6600, released in 1964, was designed by Cray to be the fastest in the world by a large margin. Cray switched from germanium to silicon transistors, which he ran very fast, and solved the resulting overheating problem by introducing refrigeration. Because the 6600 outran all computers of the time by about 10 times, it was dubbed a supercomputer. It defined the supercomputing market when one hundred computers were sold at $8 million each.

Cray left CDC in 1972 to form his own company. Four years later, in 1976, he delivered the 80 MHz Cray-1, which became one of the most successful supercomputers in history. The Cray-2, released in 1985, was an 8-processor liquid-cooled computer through which Fluorinert was pumped as it operated. It performed at 1.9 gigaflops and was the world's fastest until 1990.

While the supercomputers of the 1980s used only a few processors, in the 1990s machines with thousands of processors began to appear in both the United States and Japan, setting new computational performance records.

- Fujitsu's Numerical Wind Tunnel supercomputer used 166 vector processors to gain the top spot in 1994, with a peak speed of 1.7 gigaflops per processor.
- The Hitachi SR2201 obtained a peak performance of 600 gigaflops in 1996 by using 2048 processors connected via a fast three-dimensional crossbar network.
- The Intel Paragon could have 1000 to 4000 Intel i860 processors in various configurations and was ranked the fastest in the world in 1993. It was a MIMD machine that connected processors via a high-speed two-dimensional mesh. This allowed processes to execute on separate nodes and communicate via the Message Passing Interface.

Hardware and architecture

A Blue Gene/L cabinet showing the stacked blades, each holding many processors

Approaches to supercomputer architecture have taken dramatic turns since the earliest systems were introduced in the 1960s. Early supercomputer architectures pioneered by Seymour Cray relied on compact innovative designs and local parallelism to achieve superior computational peak performance. However, in time the demand for increased computational power ushered in the age of massively parallel systems.

While the supercomputers of the 1970s used only a few processors, in the 1990s machines with thousands of processors began to appear. By the end of the 20th century, massively parallel supercomputers with tens of thousands of "off-the-shelf" processors were the norm. Supercomputers of the 21st century can use over 100,000 processors (some being graphics units) connected by fast connections.

Throughout the decades, the management of heat density has remained a key issue for most centralized supercomputers. The large amount of heat generated by a system may also have other effects, e.g. reducing the lifetime of other system components. There have been diverse approaches to heat management, from pumping Fluorinert through the system, to a hybrid liquid-air cooling system or air cooling with normal air conditioning temperatures.

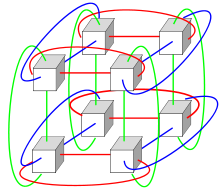

Systems with a massive number of processors generally take one of two paths. In the grid computing approach, the processing power of a large number of computers, organised as distributed, diverse administrative domains, is used opportunistically whenever a computer is available. In the other approach, a large number of processors are used in close proximity to each other, e.g. in a computer cluster. In such a centralized massively parallel system the speed and flexibility of the interconnect becomes very important. Modern supercomputers have used various approaches, ranging from enhanced InfiniBand systems to three-dimensional torus interconnects. The use of multi-core processors combined with centralization is an emerging direction, e.g. as in the Cyclops64 system.
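The wrap-around links of a three-dimensional torus interconnect are easy to see in code. The sketch below assumes a hypothetical 8×8×8 grid of nodes addressed by (x, y, z) coordinates. It lists a node's six direct neighbours and computes the minimum hop count between two nodes. It illustrates the topology only, not any vendor's routing hardware, and the function names are made up for this example.

```python
def torus_neighbors(node, dims):
    """Return the six nearest neighbours of `node` in a 3-D torus of size `dims`.

    Coordinates wrap around, so the node at x = 0 is linked to the node at x = dims[0] - 1.
    """
    x, y, z = node
    nx, ny, nz = dims
    return [
        ((x + 1) % nx, y, z), ((x - 1) % nx, y, z),
        (x, (y + 1) % ny, z), (x, (y - 1) % ny, z),
        (x, y, (z + 1) % nz), (x, y, (z - 1) % nz),
    ]


def torus_hops(a, b, dims):
    """Minimum number of link hops between nodes a and b (shorter way round each ring)."""
    hops = 0
    for ai, bi, size in zip(a, b, dims):
        d = abs(ai - bi)
        hops += min(d, size - d)
    return hops


dims = (8, 8, 8)
print(torus_neighbors((0, 0, 0), dims))
print(torus_hops((0, 0, 0), (7, 4, 1), dims))  # 1 + 4 + 1 = 6 hops
```

Without the wrap-around links, a mesh route from corner (0, 0, 0) to corner (7, 7, 7) would take 21 hops. On the torus, `torus_hops((0, 0, 0), (7, 7, 7), dims)` gives 3. That shorter worst-case distance is one reason this topology suits tightly coupled machines.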

As the price/performance of general-purpose graphics processors (GPGPUs) has improved, a number of petaflop supercomputers such as Tianhe-I and Nebulae have started to rely on them. However, other systems such as the K computer continue to use conventional processors such as SPARC-based designs. The overall applicability of GPGPUs in general-purpose high performance computing has been the subject of debate. A GPGPU may be tuned to score well on specific benchmarks, but its applicability to everyday algorithms may be limited unless significant effort is spent tuning the application for it. Nevertheless, GPUs are gaining ground: in 2012 the Jaguar supercomputer was transformed into Titan by adding GPUs alongside its CPUs.

A number of "special-purpose" systems have been designed, each dedicated to a single problem. This permits the use of specially programmed FPGA chips or even custom VLSI chips, which give better price/performance ratios at the cost of generality. Examples of special-purpose supercomputers include:

- Belle, Deep Blue and Hydra, for playing chess
- Gravity Pipe, for astrophysics
- MDGRAPE-3, for protein structure computation and molecular dynamics
- Deep Crack, for breaking the DES cipher

Energy usage and heat management

A typical supercomputer consumes large amounts of electrical power, almost all of which is converted into heat and must be removed by cooling. For example, Tianhe-1A consumes 4.04 megawatts of electricity. The cost to power and cool the system can be significant: 4 MW at $0.10/kWh is $400 an hour, or about $3.5 million per year.
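The arithmetic behind the $400-an-hour figure is power × time × tariff. The helper below is hypothetical. It assumes a constant 4 MW draw for every hour of a 365-day year at a flat $0.10/kWh. Real bills also depend on tariff structure and on any cooling load not already included in the power figure.

```python
def annual_power_cost(megawatts, dollars_per_kwh, hours=24 * 365):
    """Return (hourly_cost, yearly_cost) in dollars for a constant electrical load."""
    kilowatts = megawatts * 1000           # 1 MW = 1,000 kW
    hourly = kilowatts * dollars_per_kwh   # kW sustained for 1 h = kWh, times the tariff
    return hourly, hourly * hours


hourly, yearly = annual_power_cost(4, 0.10)
print(f"${hourly:,.0f} per hour, ${yearly:,.0f} per year")
```

With these inputs the yearly figure comes to $3,504,000, which matches the "about $3.5 million" quoted in the text.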

Heat management is a major issue in complex electronic devices and affects powerful computer systems in various ways. The thermal design power and CPU power dissipation issues in supercomputing surpass those of traditional computer cooling technologies. The supercomputing awards for green computing reflect this issue.

Packing thousands of processors together inevitably produces a high heat density that needs to be dealt with. The Cray-2 was liquid cooled and used a Fluorinert "cooling waterfall", which was forced through the modules under pressure. However, the submerged liquid cooling approach was not practical for multi-cabinet systems based on off-the-shelf processors. For System X, a special cooling system that combined air conditioning with liquid cooling was developed in conjunction with the Liebert company.

In the Blue Gene system, IBM deliberately used low-power processors to deal with heat density. By contrast, the IBM Power 775, released in 2011, has closely packed elements that require water cooling. The IBM Aquasar system uses hot water cooling to achieve energy efficiency, and the water is also used to heat buildings.

The energy efficiency of computer systems is generally measured in terms of "FLOPS per watt". In 2008, IBM's Roadrunner operated at 376 MFLOPS/W. In November 2010, the Blue Gene/Q reached 1684 MFLOPS/W. In June 2011, the top two spots on the Green500 list were occupied by Blue Gene machines in New York (one achieving 2097 MFLOPS/W). The DEGIMA cluster in Nagasaki placed third with 1375 MFLOPS/W.
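FLOPS per watt is sustained throughput divided by power draw. The same formula can be turned around to ask how much power a target speed would need at a given efficiency. The sketch below assumes a hypothetical machine sustaining 1 PFLOPS on 500 kW. The second calculation reuses the 2097 MFLOPS/W figure quoted above purely as an illustrative input, and the function names are made up for this example.

```python
def mflops_per_watt(sustained_flops, watts):
    """Energy efficiency in MFLOPS/W: sustained FLOPS divided by power draw in watts."""
    return sustained_flops / watts / 1e6


def watts_needed(target_flops, mflops_per_w):
    """Power draw (in watts) needed to sustain `target_flops` at a given efficiency."""
    return target_flops / (mflops_per_w * 1e6)


print(mflops_per_watt(1e15, 500e3))                # hypothetical machine: 2000.0 MFLOPS/W
print(f"{watts_needed(1e18, 2097) / 1e6:.0f} MW")  # one exaflop at 2097 MFLOPS/W
```

At 2097 MFLOPS/W, a one-exaflop machine would draw roughly 477 MW. This illustrates why efficiency, and not just raw speed, is tracked on lists like the Green500.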

Software and system management

Operating systems

Since the end of the 20th century, supercomputer operating systems have undergone major transformations, driven by changes in supercomputer architecture. Early operating systems were custom-tailored to each supercomputer to gain speed. The trend since then has been to move away from in-house operating systems towards adapting generic software such as Linux.

Modern massively parallel supercomputers typically separate computations from other services by using multiple types of nodes, so they usually run different operating systems on different nodes. For example, a small and efficient lightweight kernel such as CNK or CNL may run on compute nodes, while a larger system such as a Linux derivative runs on server and I/O nodes.

In a traditional multi-user computer system, job scheduling is in effect a tasking problem for processing and peripheral resources. In a massively parallel system, the job management system must manage the allocation of both computational and communication resources. It must also deal gracefully with the hardware failures that are inevitable when tens of thousands of processors are present.
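A toy version of the allocation side of job management is sketched below. It is a hypothetical first-come, first-served allocator that hands out whole nodes, never assigns nodes marked as failed, and reports which jobs are affected when a node fails mid-run. It deliberately ignores the communication-resource side mentioned above, such as placing a job's nodes close together on the interconnect. A real resource manager would weigh topology, priorities and checkpointing as well.

```python
from collections import deque


def schedule(jobs, total_nodes, failed):
    """Strict first-come, first-served allocation of whole nodes to jobs.

    jobs: list of (name, nodes_requested) in arrival order.
    failed: set of node ids that are down and must never be handed out.
    Returns (allocations, waiting); allocations maps job name -> list of node ids.
    """
    free = deque(n for n in range(total_nodes) if n not in failed)
    allocations, waiting = {}, []
    for name, wanted in jobs:
        # Once one job has to wait, later jobs queue behind it (no backfilling).
        if not waiting and wanted <= len(free):
            allocations[name] = [free.popleft() for _ in range(wanted)]
        else:
            waiting.append((name, wanted))
    return allocations, waiting


def jobs_hit_by_failure(allocations, node):
    """Jobs that would need restarting (or recovery from a checkpoint) if `node` fails."""
    return [name for name, nodes in allocations.items() if node in nodes]


allocations, waiting = schedule([("a", 3), ("b", 4), ("c", 1)], total_nodes=8, failed={2, 5})
print(allocations)  # {'a': [0, 1, 3]}
print(waiting)      # [('b', 4), ('c', 1)]
print(jobs_hit_by_failure(allocations, 3))  # ['a']
```

Job "c" could fit on a free node but still waits because of the strict ordering. Real schedulers often relax this with backfilling, at the cost of a more complex policy.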

Although most modern supercomputers use the Linux operating system, each manufacturer has its own specific Linux derivative, and no industry standard exists. This is partly because differences in hardware architecture require changes that optimize the operating system for each hardware design.

Software tools and message passing

Wide-angle view of the ALMA correlator.[65]

The parallel architectures of supercomputers often dictate the use of special programming techniques to exploit their speed. Software tools for distributed processing include standard APIs such as MPI and PVM, VTL, and open source-based software solutions such as Beowulf.

In the most common scenario, environments such as PVM and MPI are used for loosely connected clusters, and OpenMP for tightly coordinated shared-memory machines. Significant effort is required to optimize an algorithm for the interconnect characteristics of the machine it will run on. The aim is to prevent any of the CPUs from wasting time waiting on data from other nodes. GPGPUs have hundreds of processor cores and are programmed using models such as CUDA.

Moreover, it is quite difficult to debug and test parallel programs. Special techniques need to be used for testing and debugging such applications.
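The message-passing style used with MPI can be imitated on one machine to show the idea. In the sketch below, threads play the role of numbered processes ("ranks"). Each rank sums its own slice of the data and sends the partial result as a message, with a `queue.Queue` standing in for rank 0's receive buffer. This is an assumption-laden illustration and not MPI itself. Real MPI code would use a library binding (for example the mpi4py package) and a collective operation for the reduction. The function names here are hypothetical.

```python
import queue
import threading


def rank_main(rank, size, data, inbox, results):
    """One simulated process: compute a local partial sum, then message-pass it to rank 0."""
    local = sum(data[rank::size])      # each rank owns a strided slice of the data
    if rank == 0:
        total = local
        for _ in range(size - 1):      # receive exactly one message from every other rank
            total += inbox.get()       # blocks until a message arrives
        results["total"] = total
    else:
        inbox.put(local)               # "send" the partial result to rank 0


def parallel_sum(data, size=4):
    inbox = queue.Queue()              # stands in for rank 0's receive buffer
    results = {}
    threads = [threading.Thread(target=rank_main, args=(r, size, data, inbox, results))
               for r in range(size)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results["total"]


print(parallel_sum(list(range(1, 101))))  # 5050
```

Rank 0 blocks in `inbox.get()` until every partner has reported. This is the kind of waiting on data from other nodes that interconnect-aware tuning tries to minimise.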

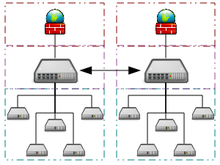

Distributed supercomputing

Opportunistic approaches


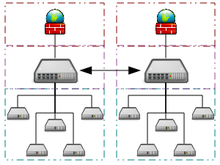

Example architecture of a grid computing system connecting many personal computers over the internet

Opportunistic supercomputing is a form of networked grid computing in which a “super virtual computer” made up of many loosely coupled volunteer computing machines performs very large computing tasks. Grid computing has been applied to a number of large-scale embarrassingly parallel problems that require supercomputing-scale performance. However, basic grid and cloud computing approaches that rely on volunteer computing cannot handle traditional supercomputing tasks such as fluid dynamics simulations.

The fastest grid computing system is the distributed computing project Folding@home. F@h reported 8.1 petaflops of x86 processing power as of March 2012. Of this, 5.8 petaflops are contributed by clients running on various GPUs, 1.7 petaflops come from PlayStation 3 systems, and the rest from various CPU systems.

The BOINC platform hosts a number of distributed computing projects. As of May 2011, BOINC recorded a processing power of over 5.5 petaflops through over 480,000 active computers on the network.[67] The most active project (measured by computational power), MilkyWay@home, reports processing power of over 700 teraflops through over 33,000 active computers.

As of May 2011, GIMPS's distributed Mersenne Prime search achieved about 60 teraflops through over 25,000 registered computers. Since 1997, the Internet PrimeNet Server has supported GIMPS's grid computing approach, one of the earliest and most successful grid computing projects.
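Dividing the quoted totals by the number of participating machines gives a feel for how little each volunteer contributes. The figures below are taken directly from the text. Because the text says "over" or "about" for them, the results are only rough averages. The helper name is hypothetical.

```python
def per_machine_gflops(total_flops, machines):
    """Average contribution per participating computer, in GFLOPS."""
    return total_flops / machines / 1e9


# Figures quoted in the text; "over"/"about" means these are rough averages only.
projects = {
    "BOINC (all projects)": (5.5e15, 480_000),
    "MilkyWay@home": (700e12, 33_000),
    "GIMPS": (60e12, 25_000),
}
for name, (flops, machines) in projects.items():
    print(f"{name}: ~{per_machine_gflops(flops, machines):.1f} GFLOPS per computer")

# Folding@home, March 2012: the CPU share is what remains after GPUs and PS3s.
fah_cpu_pflops = 8.1 - 5.8 - 1.7
print(f"Folding@home CPU share: ~{fah_cpu_pflops:.1f} PFLOPS")
```

The averages come out at roughly 11.5, 21.2 and 2.4 GFLOPS per computer, and the Folding@home CPU share at about 0.6 PFLOPS. These grids reach petaflop scale through sheer numbers of modest machines, not fast individual nodes.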

Quasi-opportunistic approaches

Quasi-opportunistic supercomputing is a form of distributed computing in which a “super virtual computer” made up of a large number of networked, geographically dispersed computers performs tasks that demand huge processing power. Quasi-opportunistic supercomputing aims to provide a higher quality of service than opportunistic grid computing. It does so by exerting more control over the assignment of tasks to distributed resources and by using intelligence about the availability and reliability of individual systems within the supercomputing network. Achieving quasi-opportunistic distributed execution of demanding parallel software in grids, however, requires the implementation of:

- grid-wise allocation agreements
- co-allocation subsystems
- communication topology-aware allocation mechanisms
- fault-tolerant message passing libraries
- data pre-conditioning
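One ingredient named above is the use of intelligence about the availability and reliability of individual systems. It can be sketched as a greedy assignment that skips unavailable nodes and prefers the ones most likely to finish their work. The node names, the reliability scores (assumed to be historical completion rates) and the greedy round-robin policy are all hypothetical illustrations. They are not the co-allocation or topology-aware mechanisms the paragraph lists.

```python
def assign_tasks(tasks, nodes, min_reliability=0.9):
    """Greedy, reliability-aware task placement.

    nodes: dict of node name -> (is_available, reliability in [0, 1]).
    Unavailable nodes and nodes below `min_reliability` are skipped; the remaining
    nodes receive tasks round-robin, most reliable first.
    """
    eligible = sorted(
        (name for name, (up, rel) in nodes.items() if up and rel >= min_reliability),
        key=lambda name: nodes[name][1],
        reverse=True,
    )
    if not eligible:
        raise RuntimeError("no node meets the availability/reliability threshold")
    return {task: eligible[i % len(eligible)] for i, task in enumerate(tasks)}


nodes = {
    "lab-a": (True, 0.99),
    "home-pc": (True, 0.60),   # available but unreliable: skipped
    "lab-b": (True, 0.95),
    "lab-c": (False, 0.99),    # reliable but currently offline: skipped
}
print(assign_tasks(["t0", "t1", "t2"], nodes))
```

With these inputs the tasks land on `lab-a`, `lab-b` and `lab-a` again. A lower threshold would admit more machines at the risk of more tasks needing to be reissued.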

Performance measurement

Capability vs capacity

Supercomputers generally aim for the maximum in capability computing rather than capacity computing. Capability computing is typically thought of as using the maximum computing power to solve a single large problem in the shortest amount of time. Often a capability system is able to solve a problem of a size or complexity that no other computer can, e.g. a very complex weather simulation application.

Capacity computing, in contrast, is typically thought of as using efficient, cost-effective computing power to solve a small number of somewhat large problems or a large number of small problems, e.g. many user access requests to a database or a web site. Architectures that lend themselves to supporting many users for routine everyday tasks may have a lot of capacity. However, they are not typically considered supercomputers, because they do not solve a single very complex problem.

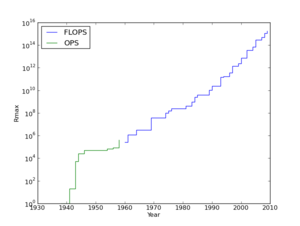

Performance metrics

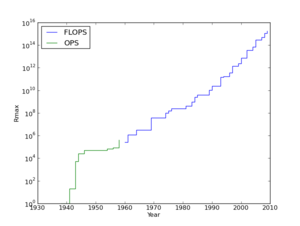

Top supercomputer speeds: log-scale speed over 60 years

In general, the speed of supercomputers is measured and benchmarked in "FLOPS" (FLoating-point Operations Per Second). General-purpose computers, by contrast, are usually rated in MIPS (millions of instructions per second). These measurements are commonly combined with an SI prefix:

- tera-, giving the shorthand "TFLOPS" (10¹² FLOPS, pronounced teraflops)
- peta-, giving the shorthand "PFLOPS" (10¹⁵ FLOPS, pronounced petaflops)

"Petascale" supercomputers can process one quadrillion (10¹⁵, or 1000 trillion) FLOPS. Exascale is computing performance in the exaflops range. An exaflop is one quintillion (10¹⁸) FLOPS, or one million teraflops.
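Converting a raw FLOPS count into the prefixed shorthand means dividing by the largest fitting power of ten. The sketch below does this for figures quoted elsewhere in this article. The helper name `format_flops` and the two-decimal formatting are choices made for this example.

```python
# SI prefixes from largest to smallest, as (scale, prefix) pairs.
PREFIXES = [(1e21, "Z"), (1e18, "E"), (1e15, "P"), (1e12, "T"), (1e9, "G"), (1e6, "M"), (1e3, "k")]


def format_flops(flops):
    """Render a raw FLOPS value with the largest SI prefix that keeps the number >= 1."""
    for scale, prefix in PREFIXES:
        if flops >= scale:
            return f"{flops / scale:.2f} {prefix}FLOPS"
    return f"{flops:.2f} FLOPS"


print(format_flops(33.86e15))  # Tianhe-2, November 2013: 33.86 PFLOPS
print(format_flops(1.9e9))     # Cray-2: 1.90 GFLOPS
print(format_flops(1e18))      # one exaflop: 1.00 EFLOPS
```
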

No single number can reflect the overall performance of a computer system. The goal of the LINPACK benchmark is to approximate how fast the computer solves numerical problems, and it is widely used in the industry. The FLOPS measurement is quoted in one of two ways:

- Theoretical floating-point performance, derived from the manufacturer's processor specifications and shown as "Rpeak" in the TOP500 lists. This is generally unachievable when running real workloads.
- Achievable throughput, derived from the LINPACK benchmarks and shown as "Rmax" in the TOP500 list.

The LINPACK benchmark typically performs LU decomposition of a large matrix.
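As a toy illustration of what LINPACK measures, the Python below factors a dense matrix by LU decomposition with partial pivoting and solves the resulting linear system. It then divides the leading-order operation count of the factorization (about 2/3·n³) by the elapsed time. This is a teaching sketch under those assumptions, not the HPL code used for TOP500 submissions. Pure Python is far slower than tuned numerical libraries, so the printed rate says more about the interpreter than about the hardware. The function names are hypothetical.

```python
import random
import time


def lu_solve(a, b):
    """Solve a x = b by LU decomposition with partial pivoting (Doolittle form, in place).

    a is an n x n list of lists and b a list of length n; both are copied first.
    """
    n = len(a)
    a = [row[:] for row in a]
    b = b[:]
    for k in range(n):
        # Partial pivoting: bring the largest remaining entry of column k to row k.
        p = max(range(k, n), key=lambda i: abs(a[i][k]))
        a[k], a[p] = a[p], a[k]
        b[k], b[p] = b[p], b[k]
        for i in range(k + 1, n):
            a[i][k] /= a[k][k]                   # multiplier, stored in the L part
            for j in range(k + 1, n):
                a[i][j] -= a[i][k] * a[k][j]     # update the trailing submatrix (U part)
    for i in range(n):                           # forward substitution with unit-lower L
        b[i] -= sum(a[i][j] * b[j] for j in range(i))
    x = [0.0] * n
    for i in reversed(range(n)):                 # back substitution with U
        x[i] = (b[i] - sum(a[i][j] * x[j] for j in range(i + 1, n))) / a[i][i]
    return x


def linpack_style_rate(n=120, seed=0):
    """Time one solve of a random n x n system; return (approx. FLOPS, max residual)."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-1, 1) for _ in range(n)]
    start = time.perf_counter()
    x = lu_solve(a, b)
    elapsed = time.perf_counter() - start
    residual = max(abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n))
    return (2 / 3) * n ** 3 / elapsed, residual


rate, residual = linpack_style_rate()
print(f"~{rate / 1e6:.1f} MFLOPS, max residual {residual:.1e}")
```

The residual check is a small version of how a LINPACK-style run confirms that a fast answer is also a correct one.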

The LINPACK performance gives some indication of performance for some real-world problems. It does not necessarily match the processing requirements of many other supercomputer workloads, which may, for example, require more memory bandwidth, better integer computing performance, or a high-performance I/O system to achieve high levels of performance.

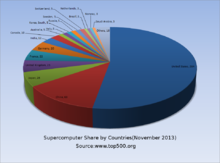

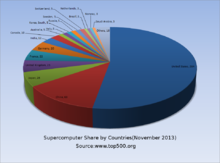

The TOP500 list

Pie chart showing the share of TOP500 supercomputers by country, as of November 2013

Since 1993, the fastest supercomputers have been ranked on the TOP500 list according to their LINPACK benchmark results. The list does not claim to be unbiased or definitive, but it is a widely cited current definition of the "fastest" supercomputer available at any given time.

This is a recent list of the computers which appeared at the top of the TOP500 list, and the "Peak speed" is given as the "Rmax" rating. For more historical data see History of supercomputing.

Applications of supercomputers

The stages of supercomputer application may be summarized in the following table:

The IBM Blue Gene/P computer has been used to simulate a number of artificial neurons equivalent to approximately one percent of a human cerebral cortex, containing 1.6 billion neurons with approximately 9 trillion connections. The same research group also succeeded in using a supercomputer to simulate a number of artificial neurons equivalent to the entirety of a rat's brain.

Modern-day weather forecasting also relies on supercomputers. The National Oceanic and Atmospheric Administration uses supercomputers to crunch hundreds of millions of observations to help make weather forecasts more accurate.

In 2011, the challenges and difficulties in pushing the envelope in supercomputing were underscored by IBM's abandonment of the Blue Waters petascale project.

Research and development trends

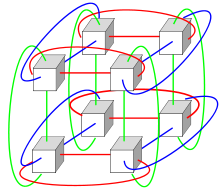

Diagram of a 3-dimensional torus interconnect used by systems such as Blue Gene, Cray XT3, etc.

Given the current speed of progress, industry experts estimate that supercomputers will reach 1 exaflops (10¹⁸, one quintillion FLOPS) by 2018. China has stated plans to have a 1 exaflop supercomputer online by 2018. SGI plans to use the Intel MIC multi-core processor architecture, which is Intel's response to GPU systems, to achieve a 500-fold increase in performance by 2018 and so reach one exaflop. Samples of MIC chips with 32 cores, which combine vector processing units with a standard CPU, have become available. The Indian government has also stated ambitions for an exaflop-range supercomputer, which it hopes to complete by 2017.

Erik P. DeBenedictis of Sandia National Laboratories theorizes that a zettaflop (10²¹, one sextillion FLOPS) computer is required to accomplish full weather modeling, which could cover a two-week time span accurately.[87][not in citation given] Such systems might be built around 2030.
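The size of the jump to exascale can be checked with a little arithmetic, starting from the 33.86 PFLOPS Tianhe-2 figure given earlier in this article. The sketch below assumes a five-year window (late 2013 to 2018) and a constant yearly growth factor. Both are simplifying assumptions made for illustration, not forecasts from the text. The constant and function names are hypothetical.

```python
TIANHE_2_FLOPS = 33.86e15  # fastest system as of November 2013, per the text
EXAFLOP = 1e18
ZETTAFLOP = 1e21


def required_annual_growth(current, target, years):
    """Constant yearly speed-up factor that turns `current` into `target` after `years`."""
    return (target / current) ** (1 / years)


gap = EXAFLOP / TIANHE_2_FLOPS
print(f"1 EFLOPS is {gap:.1f}x Tianhe-2")  # 29.5x
print(f"yearly factor over 5 years: {required_annual_growth(TIANHE_2_FLOPS, EXAFLOP, 5):.2f}x")
print(f"1 ZFLOPS / 1 EFLOPS = {ZETTAFLOP / EXAFLOP:.0f}x")  # 1000x
```

Under these assumptions the top speed would have to roughly double every year to hit one exaflop by 2018. The zettaflop machine mentioned above is a further thousandfold step beyond that.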


Notes and references[edit]

-

Jump up^ "IBM

Blue gene announcement". 03.ibm.com. 2007-06-26.

Retrieved 2012-06-09.

-

^ Jump

up to:a b Hoffman,

Allan R.; et al. (1990). Supercomputers:

directions in technology and applications. National Academies.

pp. 35–47.ISBN 0-309-04088-4.

-

^ Jump

up to:a b Hill,

Mark Donald; Jouppi, Norman Paul; Sohi, Gurindar (1999). Readings

in computer architecture. pp. 40–49. ISBN 1-55860-539-8.

-

^ Jump

up to:a b Prodan,

Radu; Fahringer, Thomas (2007). Grid

computing: experiment management, tool integration, and scientific

workflows. pp. 1–4. ISBN 3-540-69261-4.

-

Jump up^ DesktopGrid

-

^ Jump

up to:a b Performance

Modelling and Optimization of Memory Access on Cellular Computer

Architecture Cyclops64 K

Barner, GR Gao, Z Hu, Lecture Notes in Computer Science, 2005, Volume

3779, Network and Parallel Computing, Pages 132–143

-

^ Jump

up to:a b Analysis

and performance results of computing betweenness centrality on IBM

Cyclops64 by Guangming

Tan, Vugranam C. Sreedhar and Guang R. Gao The

Journal of SupercomputingVolume 56, Number 1, 1–24 September 2011

-

Jump up^ Lemke,

Tim (May 8, 2013). "NSA

Breaks Ground on Massive Computing Center".

Retrieved December 11, 2013.

-

^ Jump

up to:a b Hardware

software co-design of a multimedia SOC platformby Sao-Jie Chen,

Guang-Huei Lin, Pao-Ann Hsiung, Yu-Hen Hu 2009 ISBN pages 70-72

-

Jump up^ The

Atlas, University of Manchester,

retrieved 21 September 2010

-

Jump up^ Lavington,

Simon (1998), A History of

Manchester Computers (2

ed.), Swindon: The British Computer Society, pp. 41–52,ISBN 978-1-902505-01-5

-

Jump up^ The

Supermen, Charles Murray, Wiley & Sons, 1997.

-

Jump up^ A

history of modern computing by

Paul E. Ceruzzi 2003 ISBN

978-0-262-53203-7 page

161 [1]

-

^ Jump

up to:a b Hannan,

Caryn (2008). Wisconsin

Biographical Dictionary. pp. 83–84. ISBN 1-878592-63-7.

-

Jump up^ John

Impagliazzo, John A. N. Lee (2004). History

of computing in education. p. 172. ISBN 1-4020-8135-9.

-

Jump up^ Richard

Sisson, Christian K. Zacher (2006). The

American Midwest: an interpretive encyclopedia. p. 1489. ISBN 0-253-34886-2.

-

Jump up^ Readings

in computer architecture by

Mark Donald Hill, Norman Paul Jouppi, Gurindar Sohi 1999 ISBN

978-1-55860-539-8 page

41-48

-

Jump up^ Milestones

in computer science and information technology by

Edwin D. Reilly 2003 ISBN

1-57356-521-0 page 65

-

^ Jump

up to:a b c Parallel

computing for real-time signal processing and control by

M. O. Tokhi, Mohammad Alamgir Hossain 2003 ISBN

978-1-85233-599-1 pages

201–202

-

Jump up^ "TOP500

Annual Report 1994". Netlib.org. 1996-10-01.

Retrieved 2012-06-09.

-

Jump up^ N.

Hirose and M. Fukuda (1997). "Numerical Wind Tunnel (NWT) and CFD

Research at National Aerospace Laboratory". Proceedings of HPC-Asia '97.

IEEE Computer Society.doi:10.1109/HPC.1997.592130.

-

Jump up^ H.

Fujii, Y. Yasuda, H. Akashi, Y. Inagami, M. Koga, O. Ishihara, M.

Syazwan, H. Wada, T. Sumimoto, Architecture and performance of the

Hitachi SR2201 massively parallel processor system, Proceedings of 11th

International Parallel Processing Symposium, April 1997, Pages 233–241.

-

Jump up^ Y.

Iwasaki, The CP-PACS project, Nuclear Physics B – Proceedings

Supplements, Volume 60, Issues 1–2, January 1998, Pages 246–254.

-

Jump up^ A.J.

van der Steen, Overview of recent supercomputers, Publication of the

NCF, Stichting Nationale Computer Faciliteiten, the Netherlands, January

1997.

- Scalable input/output: achieving system balance by Daniel A. Reed 2003 ISBN 978-0-262-68142-1 page 182
- Xue-June Yang, Xiang-Ke Liao, et al. in Journal of Computer Science and Technology. "The TianHe-1A Supercomputer: Its Hardware and Software". 26, Number 3. pp. 344–351.
- The Supermen: Story of Seymour Cray and the Technical Wizards Behind the Supercomputer by Charles J. Murray 1997 ISBN 0-471-04885-2 pages 133–135
- Parallel Computational Fluid Dynamics; Recent Advances and Future Directions edited by Rupak Biswas 2010 ISBN 1-60595-022-X page 401
- Supercomputing Research Advances by Yongge Huáng 2008 ISBN 1-60456-186-6 pages 313–314
- Computational science – ICCS 2005: 5th international conference edited by Vaidy S. Sunderam 2005 ISBN 3-540-26043-9 pages 60–67
- Knight, Will: "IBM creates world's most powerful computer", NewScientist.com news service, June 2007
- N. R. Agida et al. (2005). "Blue Gene/L Torus Interconnection Network". IBM Journal of Research and Development (PDF). 45, No 2/3, March–May 2005. p. 265.
- Prickett, Timothy (May 31, 2010). "Top 500 supers – The Dawning of the GPUs". Theregister.co.uk.
- Hans Hacker et al. in Facing the Multicore-Challenge: Aspects of New Paradigms and Technologies in Parallel Computing by Rainer Keller, David Kramer and Jan-Philipp Weiss (2010). Considering GPGPU for HPC Centers: Is It Worth the Effort?. pp. 118–121. ISBN 3-642-16232-0.
- Damon Poeter (October 11, 2011). "Cray's Titan Supercomputer for ORNL Could Be World's Fastest". Pcmag.com.
- Feldman, Michael (October 11, 2011). "GPUs Will Morph ORNL's Jaguar Into 20-Petaflop Titan". Hpcwire.com.
- Timothy Prickett Morgan (October 11, 2011). "Oak Ridge changes Jaguar's spots from CPUs to GPUs". Theregister.co.uk.
- Condon, J.H. and K. Thompson, "Belle Chess Hardware", in Advances in Computer Chess 3 (ed. M.R.B. Clarke), Pergamon Press, 1982.
- Hsu, Feng-hsiung (2002). Behind Deep Blue: Building the Computer that Defeated the World Chess Champion. Princeton University Press. ISBN 0-691-09065-3.
- C. Donninger, U. Lorenz. The Chess Monster Hydra. Proc. of 14th International Conference on Field-Programmable Logic and Applications (FPL), 2004, Antwerp, Belgium, LNCS 3203, pp. 927–932.
- J. Makino and M. Taiji, Scientific Simulations with Special Purpose Computers: The GRAPE Systems, Wiley. 1998.
- RIKEN press release, Completion of a one-petaflops computer system for simulation of molecular dynamics
- Electronic Frontier Foundation (1998). Cracking DES – Secrets of Encryption Research, Wiretap Politics & Chip Design. O'Reilly & Associates Inc. ISBN 1-56592-520-3.
- "NVIDIA Tesla GPUs Power World's Fastest Supercomputer" (Press release). Nvidia. 29 October 2010.
- Balandin, Alexander A. (October 2009). "Better Computing Through CPU Cooling". Spectrum.ieee.org.
- "The Green 500". Green500.org.
- "Green 500 list ranks supercomputers". iTnews Australia.
- Wu-chun Feng (2003). "Making a Case for Efficient Supercomputing". ACM Queue Magazine, Volume 1 Issue 7, 10-01-2003. doi:10.1145/957717.957772 (PDF).
- "IBM uncloaks 20 petaflops BlueGene/Q super". The Register. 2010-11-22. Retrieved 2010-11-25.
- Prickett, Timothy (2011-07-15). "The Register: IBM 'Blue Waters' super node washes ashore in August". Theregister.co.uk. Retrieved 2012-06-09.
- "HPC Wire July 2, 2010". Hpcwire.com. 2010-07-02. Retrieved 2012-06-09.
- Martin LaMonica (2010-05-10). "CNet May 10, 2010". News.cnet.com. Retrieved 2012-06-09.
- "Government unveils world's fastest computer". CNN. Archived from the original on 2008-06-10. "performing 376 million calculations for every watt of electricity used."
- "IBM Roadrunner Takes the Gold in the Petaflop Race".
- "Top500 Supercomputing List Reveals Computing Trends". "IBM... BlueGene/Q system .. setting a record in power efficiency with a value of 1,680 Mflops/watt, more than twice that of the next best system."
- "IBM Research A Clear Winner in Green 500".
- "Green 500 list". Green500.org. Retrieved 2012-06-09.
- Encyclopedia of Parallel Computing by David Padua 2011 ISBN 0-387-09765-1 pages 426–429
- Knowing machines: essays on technical change by Donald MacKenzie 1998 ISBN 0-262-63188-1 pages 149–151
- Euro-Par 2004 Parallel Processing: 10th International Euro-Par Conference 2004, by Marco Danelutto, Marco Vanneschi and Domenico Laforenza ISBN 3-540-22924-8 page 835
- Euro-Par 2006 Parallel Processing: 12th International Euro-Par Conference, 2006, by Wolfgang E. Nagel, Wolfgang V. Walter and Wolfgang Lehner ISBN 3-540-37783-2 page
- An Evaluation of the Oak Ridge National Laboratory Cray XT3 by Sadaf R. Alam et al. International Journal of High Performance Computing Applications February 2008 vol. 22 no. 1 52–80
- Open Job Management Architecture for the Blue Gene/L Supercomputer by Yariv Aridor et al. in Job scheduling strategies for parallel processing by Dror G. Feitelson 2005 ISBN 978-3-540-31024-2 pages 95–101
- "Top500 OS chart". Top500.org. Retrieved 2010-10-31.
- "Wide-angle view of the ALMA correlator". ESO Press Release. Retrieved 13 February 2013.
- Folding@home: OS Statistics. Stanford University. Retrieved 2012-03-11.
- BOINCstats: BOINC Combined. BOINC. Retrieved 2011-05-28. Note this link will give current statistics, not those on the date last accessed.
- BOINCstats: MilkyWay@home. BOINC. Retrieved 2011-05-28. Note this link will give current statistics, not those on the date last accessed.
- "Internet PrimeNet Server Distributed Computing Technology for the Great Internet Mersenne Prime Search". GIMPS. Retrieved June 6, 2011.
- Kravtsov, Valentin; Carmeli, David; Dubitzky, Werner; Orda, Ariel; Schuster, Assaf; Yoshpa, Benny. "Quasi-opportunistic supercomputing in grids, hot topic paper (2007)". IEEE International Symposium on High Performance Distributed Computing. IEEE. Retrieved 4 August 2011.
- The Potential Impact of High-End Capability Computing on Four Illustrative Fields of Science and Engineering by Committee on the Potential Impact of High-End Computing on Illustrative Fields of Science and Engineering and National Research Council (October 28, 2008) ISBN 0-309-12485-9 page 9
- Xingfu Wu (1999). Performance Evaluation, Prediction and Visualization of Parallel Systems. pp. 114–117. ISBN 0-7923-8462-8.
- Dongarra, Jack J.; Luszczek, Piotr; Petitet, Antoine (2003), "The LINPACK Benchmark: past, present and future", Concurrency and Computation: Practice and Experience (John Wiley & Sons, Ltd.): 803–820
- Intel brochure – 11/91. "Directory page for Top500 lists. Result for each list since June 1993". Top500.org. Retrieved 2010-10-31.
- "The Cray-1 Computer System" (PDF). Cray Research, Inc. Retrieved May 25, 2011.
- Joshi, Rajani R. (9 June 1998). "A new heuristic algorithm for probabilistic optimization" (Subscription required). Department of Mathematics and School of Biomedical Engineering, Indian Institute of Technology Powai, Bombay, India. Retrieved 2008-07-01.
- "Abstract for SAMSY – Shielding Analysis Modular System". OECD Nuclear Energy Agency, Issy-les-Moulineaux, France. Retrieved May 25, 2011.
- "EFF DES Cracker Source Code". Cosic.esat.kuleuven.be. Retrieved 2011-07-08.
- "Disarmament Diplomacy: DOE Supercomputing & Test Simulation Programme". Acronym.org.uk. 2000-08-22. Retrieved 2011-07-08.
- "China's Investment in GPU Supercomputing Begins to Pay Off Big Time!". Blogs.nvidia.com. Retrieved 2011-07-08.
- Kaku, Michio. Physics of the Future (New York: Doubleday, 2011), 65.
- "Faster Supercomputers Aiding Weather Forecasts". News.nationalgeographic.com. 2010-10-28. Retrieved 2011-07-08.
- Washington Post, August 8, 2011 [dead link]
- Kan, Michael (2012-10-31). "China is building a 100-petaflop supercomputer, InfoWorld, October 31, 2012". infoworld.com. Retrieved 2012-10-31.
- Agam Shah (2011-06-20). "SGI, Intel plan to speed supercomputers 500 times by 2018, ComputerWorld, June 20, 2011". Computerworld.com. Retrieved 2012-06-09.
- Dillow, Clay (2012-09-18). "India Aims To Take The "World's Fastest Supercomputer" Crown By 2017, POPSCI, September 9, 2012". popsci.com. Retrieved 2012-10-31.
- DeBenedictis, Erik P. (2005). "Reversible logic for supercomputing". Proceedings of the 2nd conference on Computing frontiers. pp. 391–402. ISBN 1-59593-019-1.
- "IDF: Intel says Moore's Law holds until 2029". Heise Online. 2008-04-04.

The TOP500 project ranks and details the 500 most powerful (non-distributed) computer systems in the world. The project was started in 1993 and publishes an updated list of supercomputers twice a year. The first of these updates always coincides with the International Supercomputing Conference in June, and the second is presented in November at the ACM/IEEE Supercomputing Conference. The project aims to provide a reliable basis for tracking and detecting trends in high-performance computing and bases its rankings on HPL, a portable implementation of the high-performance LINPACK benchmark written in C for distributed-memory computers.
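To make the idea behind an Rmax score concrete, here is a toy, single-core sketch in Python of what a LINPACK-style measurement does: build a random dense linear system, time its solution by Gaussian elimination with partial pivoting, and divide the operation count conventionally used for LINPACK, 2/3 n^3 + 2 n^2, by the elapsed time. It is not HPL, which distributes the matrix across many nodes and relies on tuned linear-algebra libraries; the function names are hypothetical, and the rate a pure-Python loop reaches says nothing about real supercomputer hardware.

```python
import random
import time


def solve_lu(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting.

    `a` is a list of rows and `b` a list; both are overwritten.
    """
    n = len(a)
    for k in range(n):
        # Partial pivoting: swap in the row with the largest |a[i][k]|.
        p = max(range(k, n), key=lambda i: abs(a[i][k]))
        if p != k:
            a[k], a[p] = a[p], a[k]
            b[k], b[p] = b[p], b[k]
        pivot_row = a[k]
        for i in range(k + 1, n):
            m = a[i][k] / pivot_row[k]
            row = a[i]
            for j in range(k + 1, n):
                row[j] -= m * pivot_row[j]
            b[i] -= m * b[k]
    # Back substitution on the upper triangle (entries below the
    # diagonal are stale but never read).
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = b[i] - sum(a[i][j] * x[j] for j in range(i + 1, n))
        x[i] = s / a[i][i]
    return x


def linpack_style_run(n=100, seed=0):
    """Time one dense solve; return (flop/s estimate, max residual)."""
    rng = random.Random(seed)
    a = [[rng.uniform(-0.5, 0.5) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-0.5, 0.5) for _ in range(n)]
    a_copy = [row[:] for row in a]
    b_copy = b[:]

    start = time.perf_counter()
    x = solve_lu(a_copy, b_copy)
    elapsed = max(time.perf_counter() - start, 1e-9)

    # Conventional LINPACK operation count for an n x n solve.
    flops = (2.0 / 3.0) * n**3 + 2.0 * n**2
    # Check the answer against the untouched original system.
    residual = max(
        abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
    )
    return flops / elapsed, residual


if __name__ == "__main__":
    rate, res = linpack_style_run()
    print(f"~{rate / 1e6:.1f} Mflop/s, max residual {res:.2e}")
```

The residual check mirrors the fact that a benchmark run only counts if the computed answer is actually correct; a fast but wrong solve would not qualify.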

The TOP500 list is compiled by Hans Meuer of the University of Mannheim, Germany; Jack Dongarra of the University of Tennessee, Knoxville; and Erich Strohmaier and Horst Simon of NERSC/Lawrence Berkeley National Laboratory.

History

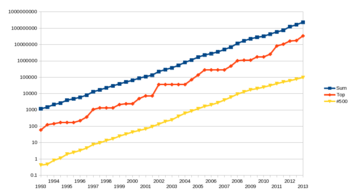

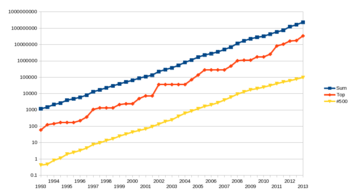

Rapid growth of supercomputer performance, based on data from the top500.org site. The logarithmic y-axis shows performance in GFLOPS. The red line denotes the fastest supercomputer in the world at the time, the yellow line denotes supercomputer no. 500 on the TOP500 list, and the dark blue line denotes the total combined performance of supercomputers on the TOP500 list.

In the early 1990s, a new definition of supercomputer was needed to produce meaningful statistics. After experimenting with metrics based on processor count in 1992, the idea was born at the University of Mannheim to use a detailed listing of installed systems as the basis. In early 1993, Jack Dongarra was persuaded to join the project with his LINPACK benchmark. A first test version was produced in May 1993, partially based on data available on the Internet, including the following sources:

- "List of the World's Most Powerful Computing Sites", maintained by Gunter Ahrendt
- David Kahaner, the director of the Asian Technology Information Program (ATIP), who in 1992 had published a report titled "Kahaner Report on Supercomputer in Japan" containing an immense amount of data

The information from those sources was used for the first two lists. Since June 1993, the TOP500 has been produced twice a year, based on site and vendor submissions only.

Since 1993, the performance of the #1 ranked system has grown steadily in agreement with Moore's law, doubling roughly every 14 months. As of June 2013, the fastest system, the Tianhe-2, with an Rpeak of 54.9024 PFlop/s, is about 419,102 times faster than the fastest system on the first list in June 1993, the Connection Machine CM-5/1024 (1,024 cores), with an Rpeak of 131.0 GFlop/s.
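As a quick check of the growth figures above, the sketch below divides the two Rpeak values and asks what steady doubling period would produce that ratio. It assumes a 240-month span (June 1993, when the CM-5 headed the list according to the list of #1 systems further down, to June 2013) and smooth exponential growth, whereas real progress came in steps. The ratio works out to about 419,102, and the implied doubling period comes out a little under 13 months, somewhat quicker than the 14-month rule of thumb; both inputs are theoretical peaks, not measured LINPACK scores.

```python
import math

# Rpeak figures quoted in the text, in floating-point operations per second.
TIANHE2_RPEAK = 54.9024e15  # Tianhe-2 (June 2013): 54.9024 PFlop/s
CM5_RPEAK = 131.0e9         # CM-5/1024 (June 1993): 131.0 GFlop/s
MONTHS_ELAPSED = 240        # June 1993 to June 2013 (assumed span)


def speedup(new_flops: float, old_flops: float) -> float:
    """How many times faster `new_flops` is than `old_flops`."""
    return new_flops / old_flops


def implied_doubling_months(ratio: float, months: float) -> float:
    """Doubling period that turns 1x into `ratio`x over `months`,
    assuming smooth exponential growth."""
    return months / math.log2(ratio)


ratio = speedup(TIANHE2_RPEAK, CM5_RPEAK)
doubling = implied_doubling_months(ratio, MONTHS_ELAPSED)

if __name__ == "__main__":
    print(f"speedup: about {ratio:,.0f} times")
    print(f"implied doubling period: {doubling:.1f} months")
```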

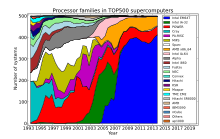

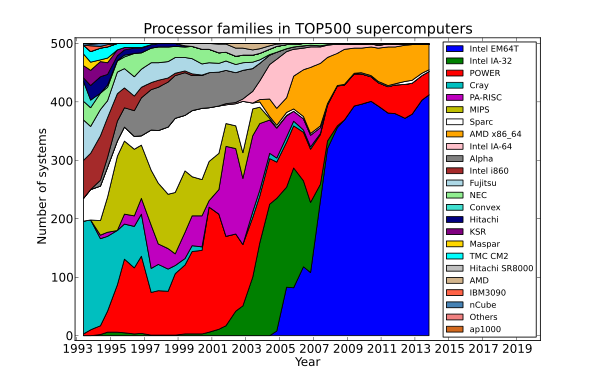

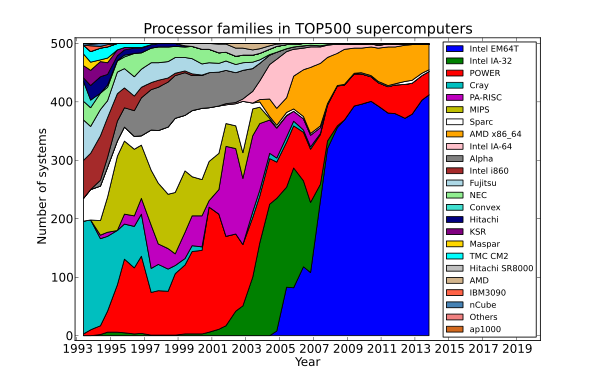

Architecture and operating systems

As of November 2013, TOP500 supercomputers are overwhelmingly based on x86-64 CPUs (the Intel EM64T and AMD AMD64 implementations of that instruction set architecture), with the RISC-based Power Architecture used by IBM POWER microprocessors and SPARC making up the remainder. Prior to the ascendance of 32-bit x86 and later 64-bit x86-64 in the early 2000s, a variety of RISC processor families made up the majority of TOP500 supercomputers, including SPARC, MIPS, PA-RISC and Alpha.

Share of processor architecture families in TOP500 supercomputers over time.

Top 10 ranking

Top 10 positions of the 42nd TOP500 list, November 18, 2013

| Rank | Rmax (Pflops) | Rpeak (Pflops) | Name | Computer (processor, interconnect) | Vendor | Site | Country, year | Operating system |
|---|---|---|---|---|---|---|---|---|
| 1 | 33.863 | 54.902 | Tianhe-2 | NUDT, Xeon E5-2692 + Xeon Phi 31S1P, TH Express-2 | NUDT | National Supercomputing Center in Guangzhou | China, 2013 | Linux (Kylin) |
| 2 | 17.590 | 27.113 | Titan | Cray XK7, Opteron 6274 + Tesla K20X, Cray Gemini Interconnect | Cray | Oak Ridge National Laboratory | United States, 2012 | Linux (CLE, SLES based) |
| 3 | 17.173 | 20.133 | Sequoia | Blue Gene/Q, PowerPC A2, Custom | IBM | Lawrence Livermore National Laboratory | United States, 2013 | Linux (RHEL and CNK) |
| 4 | 10.510 | 11.280 | K computer | RIKEN, SPARC64 VIIIfx, Tofu | Fujitsu | RIKEN | Japan, 2011 | Linux |
| 5 | 8.586 | 10.066 | Mira | Blue Gene/Q, PowerPC A2, Custom | IBM | Argonne National Laboratory | United States, 2013 | Linux (RHEL and CNK) |
| 6 | 6.271 | 7.779 | Piz Daint | Cray XC30, Xeon E5-2670 + Tesla K20X, Aries | Cray Inc. | Swiss National Supercomputing Centre | Switzerland, 2013 | Linux (CLE) |
| 7 | 5.168 | 8.520 | Stampede | PowerEdge C8220, Xeon E5-2680 + Xeon Phi, Infiniband | Dell | Texas Advanced Computing Center | United States, 2013 | Linux |
| 8 | 5.008 | 5.872 | JUQUEEN | Blue Gene/Q, PowerPC A2, Custom | IBM | Forschungszentrum Jülich | Germany, 2013 | Linux (RHEL and CNK) |
| 9 | 4.293 | 5.033 | Vulcan | Blue Gene/Q, PowerPC A2, Custom | IBM | Lawrence Livermore National Laboratory | United States, 2013 | Linux (RHEL and CNK) |
| 10 | 2.897 | 3.185 | SuperMUC | iDataPlex DX360M4, Xeon E5-2680, Infiniband | IBM | Leibniz-Rechenzentrum | Germany, 2012 | Linux |

Legend:

- Rank – Position within the TOP500 ranking. In the TOP500 List table, the computers are ordered first by their Rmax value. In the case of equal performance (Rmax value) for different computers, the order is by Rpeak. For sites that have the same computer, the order is by memory size and then alphabetically.
- Rmax – The highest score measured using the LINPACK benchmark suite. This is the number used to rank the computers. Measured in quadrillions of floating-point operations per second, i.e. petaflops.
- Rpeak – The theoretical peak performance of the system. Measured in Pflops.
- Name – Some supercomputers are unique, at least at their location, and are therefore given a name by their owner.
- Computer – The computing platform as it is marketed.
- Processor cores – The number of processor cores actively used when running LINPACK. This figure is followed by the processor architecture of the cores. If the interconnect between computing nodes is of interest, it is also included here.
- Vendor – The manufacturer of the platform and hardware.
- Site – The name of the facility operating the supercomputer.
- Country – The country in which the computer is situated.
- Year – The year of installation or last major update.
- Operating system – The operating system that the computer uses.
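The ordering rules in the Rank entry of the legend amount to a multi-key sort. The sketch below assumes that larger Rmax, Rpeak and memory all rank higher and that the final alphabetical tie-break is on site name; TOP500's own procedure may differ in detail. The `Entry` type and the two "Machine" rows are hypothetical, the Rmax and Rpeak values for the three real systems come from the table above, and their memory figures are zero placeholders rather than real data.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    name: str
    site: str
    rmax: float       # Pflop/s, measured LINPACK score
    rpeak: float      # Pflop/s, theoretical peak
    memory_tb: float  # hypothetical field; larger is assumed to rank higher


def rank_key(e: Entry):
    # Negate the numeric fields so larger values sort first; the last
    # tie-breaker (site name) sorts alphabetically ascending.
    return (-e.rmax, -e.rpeak, -e.memory_tb, e.site)


def rank(entries):
    return sorted(entries, key=rank_key)


systems = [
    Entry("Titan", "Oak Ridge National Laboratory", 17.590, 27.113, 0.0),
    Entry("Tianhe-2", "National Supercomputing Center in Guangzhou", 33.863, 54.902, 0.0),
    Entry("Sequoia", "Lawrence Livermore National Laboratory", 17.173, 20.133, 0.0),
    # Two hypothetical identical machines at different sites: they tie on
    # Rmax, Rpeak and memory, so the site name decides the order.
    Entry("Machine B", "Site Zeta", 1.000, 1.200, 64.0),
    Entry("Machine A", "Site Alpha", 1.000, 1.200, 64.0),
]

if __name__ == "__main__":
    for position, e in enumerate(rank(systems), start=1):
        print(position, e.name, e.rmax)
```

Encoding the rules as one tuple key keeps every tie-breaker in a single place, so changing a rule means editing one function.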

Other rankings

Top countries

Numbers below represent the number of computers in the TOP500 that are in each of the listed countries.

Systems ranked #1 since 1993

- NUDT Tianhe-2 (China, June 2013 – present)
- Cray Titan (United States, November 2012 – June 2013)
- IBM Sequoia Blue Gene/Q (United States, June 2012 – November 2012)
- Fujitsu K computer (Japan, June 2011 – June 2012)
- NUDT Tianhe-1A (China, November 2010 – June 2011)
- Cray Jaguar (United States, November 2009 – November 2010)
- IBM Roadrunner (United States, June 2008 – November 2009)
- IBM Blue Gene/L (United States, November 2004 – June 2008)
- NEC Earth Simulator (Japan, June 2002 – November 2004)
- IBM ASCI White (United States, November 2000 – June 2002)
- Intel ASCI Red (United States, June 1997 – November 2000)
- Hitachi CP-PACS (Japan, November 1996 – June 1997)
- Hitachi SR2201 (Japan, June 1996 – November 1996)
- Fujitsu Numerical Wind Tunnel (Japan, November 1994 – June 1996)
- Intel Paragon XP/S140 (United States, June 1994 – November 1994)
- Fujitsu Numerical Wind Tunnel (Japan, November 1993 – June 1994)
- TMC CM-5 (United States, June 1993 – November 1993)

Number of systems

By number of systems as of November 2013:

Large machines not on the list

A few machines that have not been benchmarked are not eligible for the list, such as NCSA's Blue Waters. Additionally, purpose-built machines that cannot run, or do not run, the benchmark are not included, such as RIKEN MDGRAPE-3.


China Still Has The World's Fastest Supercomputer

Earlier this week, the Top500 organization announced its semi-annual list of the Top 500 supercomputers in the world. And for the second consecutive list, China’s Tianhe-2 is the world’s fastest by a long shot, maintaining its performance of 33.86 petaflop/s (quadrillions of calculations per second) on the standardized benchmark attached to every supercomputer on the list.

The Tianhe-2 supercomputer. (Credit: National University of Defense Technology)

In fact, the top 5 fastest supercomputers on the November 2013 list are the same as the top 5 on the June 2013 list. The second fastest supercomputer in the world is Cray’s Titan supercomputer at the Oak Ridge National Laboratory.

Rounding out the top five are IBM’s Sequoia supercomputer, RIKEN’s K Computer in Japan, and IBM’s Mira supercomputer at the Argonne National Laboratory.

The stability of the top five is unusual for the past few years, which have seen several different computers named the fastest while others moved up and down the ranks.

The Tianhe-2 was built by the National University of Defense Technology (NUDT) in China. It has a total of 3,120,000 Intel processing cores, but also features a number of Chinese-built components and runs on a version of Linux called Kylin, which was developed in-house by the NUDT.

The newest entry into the top ten is number 6 on the list, Piz Daint, a Cray system installed at the Swiss National Supercomputing Centre. Piz Daint is now the fastest supercomputer in Europe. It’s also the most energy-efficient system in the Top 10. That’s worth noting, because one of the biggest constraints on supercomputing is the sheer amount of power needed to operate these systems.

“In the top 10, computers might hit a high mark,” Kai Dupke, a senior product manager at SUSE Linux, told me in a conversation about the supercomputer race. “But in the usual operation, they operate more slowly simply because it’s too expensive to run on full speed.”

Although China is still home to the world’s fastest supercomputer, the United States continues to lead in high-performance computing. In June, the United States had 253 of the top 500 fastest computers; on the November list, it has 265. China, on the other hand, has 63 computers on the list, down from the 65 it had in June.

That new supercomputer is not your friend

China reclaims the fastest computer in the world prize. Get ready for even better surveillance

ANDREW LEONARD

We learned this week that China has the fastest supercomputer in the world, by a long shot. The Tianhe-2 is almost twice as fast as the previous record holder, a U.S.-made Cray Titan.

Such news, by itself, isn’t particularly amazing. It’s not even the first time a Chinese supercomputer has held the top ranking. The Tianhe-1A grabbed the pole position in November 2010 and held it until June 2011. Before that, Japan and the United States had traded places since 1993. Supercomputing speed follows roughly the same trajectory as Moore’s Law: it doubles about every 14 months. There will always be new contenders for the throne.

But this month, there’s a new context for news about the debut of ever more powerful supercomputers. Consider the first comment left on a Reddit thread announcing the exploits of Tianhe-2:

This would be a pretty awesome tool for churning through millions of phone records and digital copies of people’s online data.

Haha. Funny. But not really. The not-so-subtle implication of the constant expansion of supercomputing capacity is that, to borrow a quote from Intel’s Raj Hazra that appeared in the New York Times, “the insatiable need for computing is driving this.” Big Data wants Big Computers.

Cyber Espionage: The Chinese Threat

Experts at the highest levels of government say it's the biggest threat facing American business today. Hackers are stealing valuable trade secrets, intellectual property and confidential business strategies.

Government officials are calling it the biggest threat to America's economic security. Cyber spies hacking into U.S. corporations' computer networks are stealing valuable trade secrets, intellectual property data and confidential business strategies. The biggest aggressor? China.

CNBC's David Faber investigates this new wave of espionage, which experts say amounts to the largest transfer of wealth ever seen, draining America of its competitive advantage and its economic edge. Unless corporate America wakes up and builds an adequate defense strategy, experts say it may be too late.

The Danger of Mixing Cyberespionage With Cyberwarfare

China has recently been accused of intense spying activity in cyberspace, following claims that the country uses cyber tactics to gain access to military and technological secrets held by both foreign states and corporations. In this context, the rhetoric of cyberwar has also raised its head. Experts are questioning whether we are already at war with China.

But a danger lies at the heart of the cyberwar rhetoric. Declaring war, even cyberwar, has always had serious consequences. Since war is acknowledged as the most severe threat to the survival and well-being of society, war rhetoric easily feeds an atmosphere of fear, raises the emergency level and instigates counter-measures. It may also lead to an intensified cyber arms race.

It needs to be remembered that cyber espionage does not equate to cyber warfare, and in fact making such a link is completely unjustifiable.

Cyber espionage is an activity multiple actors resort to in the name of security, business, politics or technology. It is not inherently military. Espionage is finding information that ought to remain secret and, as such, may be carried out for a range of different reasons and for varying lengths of time.

Conducting effective cyber espionage campaigns may in fact take years, and results can be uncertain. However, engaging in long-lasting cyberwar activity is simply not sustainable, as the costs of warfare are high and the motives very different; gaining information is never the prime motive. War is waged in order to re-engineer the opposing society to support one’s interests and values. This holds true for cyberwar as well.

But that's not to say that the two concepts aren't interlinked. Cyber espionage can be utilised in warfare for preparing for war, as part of intelligence efforts, and for preparing for peace. Plus, a long-lasting spying campaign that is eventually detected may lead to war if it is interpreted to justify pre-emptive or preventive actions.

However, the probability of cyberwar in the near future is low. What we should be more conscious of is the use of cyber in conventional conflicts. Cyber capabilities are now classified as weapons; they are a fifth dimension of warfare (in addition to land, sea, air and space), and whilst it’s unlikely future battles will be completely online, it is difficult to imagine future wars or conflicts without cyber activities.

But even if the concept of war has become vague, espionage should be regarded as a separate activity in its own right. For actions to qualify as war, they should cause massive human loss and material damage; cyberespionage does not do this. For the majority, there is little to be gained from confusing cyberespionage with cyberwarfare, yet the potential losses in the form of increasingly restricted freedom and curtailed private space may be substantial.

The continued rapid growth of processing power brings with it enormous privacy implications. Applications such as facial recognition require massive computing power. Things that aren’t quite possible today, like waving your phone at a stranger and identifying them with NSA-like precision, will be child’s play tomorrow. Encryption standards that we think can resist the toughest attacks right now may wilt before the power of what’s around the corner. I used to hear about new supercomputers and think about the inexorable march forward into the science fiction future. Now I just think, oh great: the architecture of surveillance just got more fortified.

Read more: http://insights.wired.com/profiles/blogs/the-danger-of-mixing-cyberespionage-with-cyberwarfare#ixzz30BOzO65c

THE SECRET WAR

INFILTRATION. SABOTAGE. MAYHEM. FOR YEARS, FOUR-STAR GENERAL KEITH ALEXANDER HAS BEEN BUILDING A SECRET ARMY CAPABLE OF LAUNCHING DEVASTATING CYBERATTACKS. NOW IT’S READY TO UNLEASH HELL.

INSIDE FORT MEADE, Maryland, a top-secret city bustles. Tens of thousands of people move through more than 50 buildings; the city has its own post office, fire department, and police force. But as if designed by Kafka, it sits among a forest of trees, surrounded by electrified fences and heavily armed guards, protected by antitank barriers, monitored by sensitive motion detectors, and watched by rotating cameras. To block any telltale electromagnetic signals from escaping, the inner walls of the buildings are wrapped in protective copper shielding and the one-way windows are embedded with a fine copper mesh.

This is the undisputed domain of General Keith Alexander, a man few even in Washington would likely recognize. Never before has anyone in America’s intelligence sphere come close to his degree of power, the number of people under his command, the expanse of his rule, the length of his reign, or the depth of his secrecy. A four-star Army general, his authority extends across three domains: He is director of the world’s largest intelligence service, the National Security Agency; chief of the Central Security Service; and commander of the US Cyber Command. As such, he has his own secret military, presiding over the Navy’s 10th Fleet, the 24th Air Force, and the Second Army.

Alexander runs the nation’s cyberwar efforts, an empire he has built over the past eight years by insisting that the US’s inherent vulnerability to digital attacks requires him to amass more and more authority over the data zipping around the globe. In his telling, the threat is so mind-bogglingly huge that the nation has little option but to eventually put the entire civilian Internet under his protection, requiring tweets and emails to pass through his filters, and putting the kill switch under the government’s forefinger. “What we see is an increasing level of activity on the networks,” he said at a recent security conference in Canada. “I am concerned that this is going to break a threshold where the private sector can no longer handle it and the government is going to have to step in.”

In its tightly controlled public relations, the NSA has focused attention on the threat of cyberattack against the US: the vulnerability of critical infrastructure like power plants and water systems, the susceptibility of the military’s command and control structure, the dependence of the economy on the Internet’s smooth functioning. Defense against these threats was the paramount mission trumpeted by NSA brass at congressional hearings and hashed over at security conferences.

But there is a flip side to this equation that is rarely mentioned: The military has for years been developing offensive capabilities, giving it the power not just to defend the US but to assail its foes. Using so-called cyber-kinetic attacks, Alexander and his forces now have the capability to physically destroy an adversary’s equipment and infrastructure, and potentially even to kill.

Alexander, who declined to be interviewed for this article, has concluded that such cyberweapons are as crucial to 21st-century warfare as nuclear arms were in the 20th. And he and his cyberwarriors have already launched their first attack. The cyberweapon that came to be known as Stuxnet was created and built by the NSA in partnership with the CIA and Israeli intelligence in the mid-2000s. The first known piece of malware designed to destroy physical equipment, Stuxnet was aimed at Iran’s nuclear facility in Natanz. By surreptitiously taking control of an industrial control link known as a Scada (Supervisory Control and Data Acquisition) system, the sophisticated worm was able to damage about a thousand centrifuges used to enrich nuclear material.

The success of this sabotage came to light only in June 2010, when the malware spread to outside computers. It was spotted by independent security researchers, who identified telltale signs that the worm was the work of thousands of hours of professional development. Despite headlines around the globe, officials in Washington have never openly acknowledged that the US was behind the attack. It wasn’t until 2012 that anonymous sources within the Obama administration took credit for it in interviews with The New York Times.

But Stuxnet is only the beginning. Alexander’s agency has recruited thousands of computer experts, hackers, and engineering PhDs to expand US offensive capabilities in the digital realm. The Pentagon has requested $4.7 billion for “cyberspace operations,” even as the budget of the CIA and other intelligence agencies could fall by $4.4 billion. It is pouring millions into cyberdefense contractors. And more attacks may be planned.

“WE JOKINGLY REFERRED TO HIM AS EMPEROR ALEXANDER, BECAUSE WHATEVER KEITH WANTS, KEITH GETS.”

Inside the government, the general is regarded with a mixture of respect and fear, not unlike J. Edgar Hoover, another security figure whose tenure spanned multiple presidencies. “We jokingly referred to him as Emperor Alexander, with good cause, because whatever Keith wants, Keith gets,” says one former senior CIA official who agreed to speak on condition of anonymity. “We would sit back literally in awe of what he was able to get from Congress, from the White House, and at the expense of everybody else.”

Now 61, Alexander has said he plans to retire in 2014; when he does step down he will leave behind an enduring legacy: a position of far-reaching authority and potentially Strangelovian powers at a time when the distinction between cyberwarfare and conventional warfare is beginning to blur.

A recent Pentagon report made that point in dramatic terms. It recommended possible deterrents to a cyberattack on the US. Among the options: launching nuclear weapons.

FROM http://www.wired.com/2013/06/general-keith-alexander-cyberwar/all/

THIS IS WHERE I POST WHAT I'M DOING AND THINKING

BLOG INDEX 2011

BLOG INDEX 2012 - page 1

JANUARY THRU APRIL 2012

BLOG INDEX 2012 - PAGE 2

MAY THRU AUGUST 2012

BLOG INDEX 2012 - PAGE 3

SEPTEMBER THRU DECEMBER

BLOG INDEX 2013

JAN, FEB, MAR, APR. 2013

BLOG INDEX - PAGE 2 - 2013

MAY, JUNE, JULY, AUGUST 2013

BLOG INDEX - PAGE 3 - 2013

SEPT, OCT, NOV, DEC, 2013

BLOG INDEX - PAGE 4 - 2014

JAN., FEB., MAR., APR. 2014
